Defining Systems (Thinking in Systems → AI Systems)

Systems thinking explains outcomes by structure, not events. A system is defined by elements, interconnections (especially information/physical flows), and its real purpose (inferred from behavior). Stocks (accumulations) change through flows (rates), and feedback loops shape dynamics: balancing loops stabilize, reinforcing loops amplify; delays often create oscillation and overshoot. Many failures repeat as structural “traps” (commons, escalation, wrong goals), so the best interventions move up the leverage ladder—from tweaking numbers to redesigning information flows, rules, goals, and paradigms.

Claim

If you want to understand why a system produces its outcomes—and how to change them—you must look at structure, not just events: stocks and flows, feedback loops, delays, plus the higher-leverage layers of information, rules, goals, and paradigms. Most interventions fail because they push on low-leverage knobs (numbers) or ignore loops that push back.

What a system is (and why “visible parts” mislead)

A system is not a pile of parts. It is an interconnected set of elements that is coherently organized to produce something. Every system has (1) elements, (2) interconnections, and (3) a function/purpose.

Elements are the pieces (people, objects, rules, intangibles). They’re the most visible layer, so attention naturally gravitates there.

Interconnections are how elements relate, especially the information flows and physical flows that coordinate decisions and actions. In practice, a system’s behavior often depends more on these relationships than on the parts themselves.

Purpose is what the system actually produces. You infer it from behavior over time, not from what it declares. The least obvious part—purpose—is often the strongest determinant of behavior.

Stocks, flows, and the engine: feedback loops

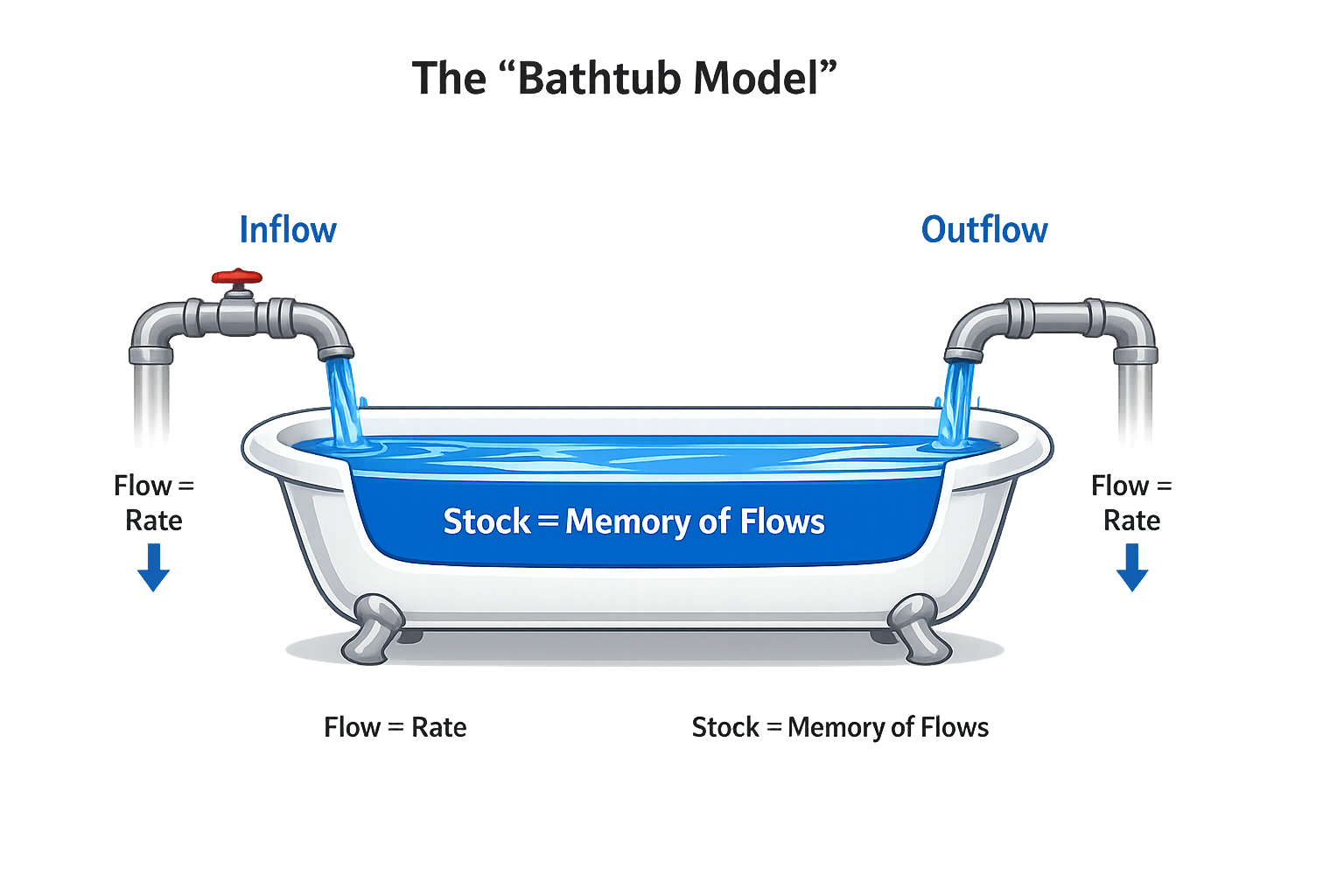

A stock is an accumulation you can measure at a point in time: inventory, money, population, bugs. A stock is the system’s memory of past flows.

A flow is a rate that changes a stock: inflow/outflow per unit time (purchases/sales, births/deaths, bugs created/bugs resolved). A stock rises if inflow > outflow, falls if outflow > inflow, and holds steady if they’re equal.
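
As a minimal sketch of the stock-and-flow arithmetic above, the following Python function steps a single stock forward under constant flows. The function name `simulate_stock`, the unit time step, and the example numbers are illustrative assumptions, not part of any particular model.

```python
def simulate_stock(initial, inflow, outflow, steps, dt=1.0):
    """Step one stock forward in time under constant inflow and outflow."""
    levels = [initial]
    for _ in range(steps):
        # The stock changes only through its net flow over the time step.
        levels.append(levels[-1] + (inflow - outflow) * dt)
    return levels

rising = simulate_stock(100, inflow=12, outflow=10, steps=5)   # inflow > outflow
falling = simulate_stock(100, inflow=8, outflow=10, steps=5)   # outflow > inflow
steady = simulate_stock(100, inflow=10, outflow=10, steps=5)   # flows equal

print(rising[-1], falling[-1], steady[-1])
```

The stock at any step is just the starting level plus all the net flow accumulated so far, which is the sense in which a stock "remembers" past flows.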

A feedback loop exists when the level of a stock influences decisions that adjust flows, which then change that stock again. That closed chain is how many systems “run themselves.”

There are two fundamental loop types:

- Balancing (B): discrepancy-reducing, goal-seeking, stabilizing (thermostat behavior).

- Reinforcing (R): amplifying, self-multiplying, snowball dynamics (runaway growth or collapse).
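
The two loop types can be contrasted with a small sketch. It assumes the simplest possible forms: a balancing flow proportional to the gap between goal and stock, and a reinforcing flow proportional to the stock itself. The function names, rates, and starting values are hypothetical.

```python
def balancing_step(stock, goal, adjustment_rate):
    # Balancing loop: the flow shrinks as the stock approaches the goal.
    return stock + adjustment_rate * (goal - stock)

def reinforcing_step(stock, growth_rate):
    # Reinforcing loop: the flow grows with the stock itself.
    return stock + growth_rate * stock

def run(step, stock, steps, **params):
    """Apply one loop rule repeatedly and record the stock after each step."""
    history = [stock]
    for _ in range(steps):
        stock = step(stock, **params)
        history.append(stock)
    return history

balancing = run(balancing_step, 10.0, 20, goal=20.0, adjustment_rate=0.3)
reinforcing = run(reinforcing_step, 10.0, 20, growth_rate=0.1)

print(round(balancing[-1], 3), round(reinforcing[-1], 3))
```

With these numbers the balancing run closes to within 0.01 of its goal, while the reinforcing run grows more than sixfold and each increment is larger than the one before it.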

A brief visit to the “systems zoo”

Different domains behave similarly because they share the same feedback-loop structure.

One-stock systems

A thermostat regulates one stock (room temperature) with a balancing loop that heats toward a setpoint, while a competing balancing loop (heat leaking outdoors) pulls the temperature away. With competing balancing loops, the controlled variable settles short of the target unless the setpoint is raised to compensate for the ongoing leakage.
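
A rough sketch of the two competing loops, assuming a furnace whose output is proportional to the gap below the setpoint (real thermostats usually switch on and off) and a leak proportional to the indoor/outdoor difference. The name `simulate_room` and all gains and temperatures are made-up illustration values.

```python
def simulate_room(setpoint, outside=10.0, heating_gain=0.5, leak_rate=0.1,
                  start=10.0, hours=50):
    """Room temperature after `hours` steps under two competing balancing loops."""
    room = start
    for _ in range(hours):
        # Furnace loop: pulls the room toward the setpoint (a furnace cannot cool).
        heat_in = max(0.0, heating_gain * (setpoint - room))
        # Leak loop: pulls the room toward the outside temperature.
        heat_out = leak_rate * (room - outside)
        room += heat_in - heat_out
    return room

naive = simulate_room(setpoint=18.0)        # settles below the 18 degree target
compensated = simulate_room(setpoint=19.6)  # setpoint raised to offset the leak

print(round(naive, 2), round(compensated, 2))
```

Under these assumptions the room settles where heating equals leakage, at (0.5 × 18 + 0.1 × 10) / 0.6 ≈ 16.67 degrees, short of the 18 degree target. Raising the setpoint to 19.6 lands the room at 18.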

One stock with both a reinforcing loop and a balancing loop (population or capital) is a simple but powerful structure: births or investment reinforce growth, while deaths or depreciation balance it. What you see depends on which loop dominates:

- Reinforcing dominates → exponential growth.

- Balancing dominates → decline.

- Equal strength → leveling off.
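
The three dominance cases above can be reproduced with a one-stock population sketch. The constant birth and death rates and the name `simulate_population` are assumptions chosen for illustration; real rates shift over time, which is what makes dominance shift.

```python
def simulate_population(initial, birth_rate, death_rate, years):
    """One stock with a reinforcing loop (births) and a balancing loop (deaths)."""
    population = initial
    history = [population]
    for _ in range(years):
        births = birth_rate * population   # reinforcing: more people, more births
        deaths = death_rate * population   # balancing: more people, more deaths
        population += births - deaths
        history.append(population)
    return history

growth = simulate_population(1000.0, birth_rate=0.04, death_rate=0.02, years=50)
decline = simulate_population(1000.0, birth_rate=0.02, death_rate=0.04, years=50)
level = simulate_population(1000.0, birth_rate=0.03, death_rate=0.03, years=50)

print(round(growth[-1]), round(decline[-1]), round(level[-1]))
```

The structure is identical in all three runs; only the relative strength of the two loops changes the behavior.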

Delays inside balancing loops (inventory management) tend to produce oscillations. With perception, response, and delivery delays, a step increase in demand can cause over-ordering, then under-ordering, and the cycle repeats. Counterintuitively, reacting faster can make the instability worse; sometimes damping requires slowing the reaction.
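
The sketch below is one hypothetical way to see this effect. It assumes daily ordering, a fixed delivery delay, exponentially smoothed sales as the perception delay, and a manager who ignores orders already in transit. All names and numbers (`simulate_inventory`, a demand step from 20 to 22 units per day, 10 days of desired coverage) are assumptions for illustration.

```python
from collections import deque

def simulate_inventory(response_time, days=200, delivery_delay=5,
                       perception_time=5.0, coverage_days=10.0):
    """Daily inventory levels after a step increase in demand."""
    sales_before, sales_after, step_day = 20.0, 22.0, 5
    perceived_sales = sales_before
    inventory = coverage_days * sales_before             # start at the desired level
    in_transit = deque([sales_before] * delivery_delay)  # orders already on the way
    history = []
    for day in range(days):
        sales = sales_after if day >= step_day else sales_before
        # Delivery delay: today's arrivals were ordered delivery_delay days ago.
        inventory += in_transit.popleft() - sales        # negative values mean backlog
        # Perception delay: the sales estimate catches up only gradually.
        perceived_sales += (sales - perceived_sales) / perception_time
        desired = coverage_days * perceived_sales
        # Response delay: close the inventory gap over response_time days.
        order = max(0.0, perceived_sales + (desired - inventory) / response_time)
        in_transit.append(order)
        history.append(inventory)
    return history

def swing(history, last=50):
    """Peak-to-trough inventory range over the final `last` days."""
    tail = history[-last:]
    return max(tail) - min(tail)

slow = simulate_inventory(response_time=6.0)  # patient correction
fast = simulate_inventory(response_time=2.0)  # aggressive correction

print(swing(slow), swing(fast))
```

In this setup the patient policy settles to a nearly constant inventory, while the aggressive policy keeps overshooting in both directions because each correction arrives after the situation has already changed.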

Two-stock systems: growth with constraints (“limits to growth”)

Any real growing system in a finite environment has:

- at least one reinforcing loop driving growth, and

- at least one balancing loop that eventually constrains it.

Nonrenewables are stock-limited: extracting faster is possible, but it shortens the resource’s lifetime. Renewables are flow-limited: a sustainable harvest must not exceed the regeneration rate; exceed it for long enough and you can destroy the regenerative capacity and trigger collapse.
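
To make the stock-limited versus flow-limited distinction concrete, here is a small sketch. It assumes logistic regeneration for the renewable resource, which is a common modeling choice but not something the text specifies, and a constant extraction rate for the nonrenewable one. Function names and parameters are hypothetical.

```python
def nonrenewable_lifetime(reserve, extraction_rate):
    # Stock-limited: lifetime is the reserve divided by how fast it is drawn down.
    return reserve / extraction_rate

def simulate_renewable(harvest, years=200, stock=1000.0,
                       capacity=1000.0, regen_rate=0.2):
    """Yearly stock of a renewable resource under a constant harvest."""
    history = [stock]
    for _ in range(years):
        # Assumed logistic regeneration: peaks at regen_rate * capacity / 4.
        regeneration = regen_rate * stock * (1.0 - stock / capacity)
        stock = max(0.0, stock + regeneration - harvest)
        history.append(stock)
    return history

sustainable = simulate_renewable(harvest=40.0)  # below the peak regeneration of 50
overharvest = simulate_renewable(harvest=60.0)  # above the peak regeneration of 50

print(nonrenewable_lifetime(1000.0, 10.0), nonrenewable_lifetime(1000.0, 20.0))
print(round(sustainable[-1], 1), overharvest[-1])
```

With these assumed parameters, doubling the extraction rate halves the nonrenewable lifetime. The renewable stock settles at a lower but stable level when the harvest stays under peak regeneration, and falls to zero when the harvest exceeds it.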

Why systems work so well

Many systems absorb shocks and restore function because they exhibit:

Resilience: the ability to survive, adapt, and recover in a variable environment. It often comes from multiple redundant feedback loops operating at different time scales. Resilience has limits, and it’s frequently traded away for short-term efficiency and stability.

Self-organization: the ability to create more complex structure from within—learning, diversifying, evolving. It requires freedom and experimentation (and some disorder), which makes it unpredictable and therefore often suppressed by institutions that want uniformity.

Hierarchy: subsystems nested within larger systems. Hierarchy works because subsystems self-regulate locally, higher levels coordinate across subsystems, and dense links within subsystems combined with looser links between them reduce the information load. It fails via:

- Suboptimization: subsystems optimize their own goal at the expense of the whole.

- Overcontrol: excessive central control crushes autonomy and adaptability.

Why systems surprise us

Systems surprise us less because they’re mysterious and more because our mental models are incomplete.

We confuse events with understanding. Systems thinking pushes you to look at behavior over time and then identify the structure producing it.

We think linearly in a nonlinear world. Thresholds and saturation can change the relative strength of loops and cause shifting dominance.

Model boundaries are mental. Boundaries that are too narrow ignore crucial stocks and flows; boundaries that are too wide create noise and paralysis. The right boundary depends on the question and time horizon.

Delays are everywhere. With long delays, acting only once a problem is obvious is often too late; delays are a major source of overshoot and oscillation.

Bounded rationality matters. People act reasonably given limited, delayed, biased information and local incentives. Swapping people rarely changes outcomes; redesigning information, incentives, goals, rules, and constraints does.

System traps (and the way out)

Recurring structural patterns create recurring failures. The leverage move is to change structure—goals, loops, information flows, rules, constraints.

- Policy resistance (“fixes that fail”): multiple actors pull the same stock toward different goals.

  Way out: stop escalating ineffective force; align goals through an overarching shared objective.

- Tragedy of the commons: individual gain from exploiting a shared, erodable resource; feedback is weak/delayed.

  Way out: restore feedback via norms/education, privatization where possible, or regulation with monitoring and enforcement.

- Drift to low performance (“eroding goals”): standards adjust downward as performance slips, reinforcing decline.

  Way out: keep standards absolute or anchored to best historical performance.

- Escalation: goals are set relative to an opponent, creating arms-race reinforcing loops.

  Way out: refuse to compete (de-escalate) or negotiate caps with enforcement.

- Success to the successful: winners gain capacity to win again, concentrating resources.

  Way out: diversify, cap concentration, level the playing field, design rewards that don’t amplify advantage.

- Shifting the burden (dependence): symptomatic fixes erode internal capacity, increasing dependency.

  Way out: avoid symptom-only fixes; strengthen self-maintenance and then exit.

- Rule beating: people comply with the letter, not the spirit, distorting outcomes.

  Way out: treat it as feedback; redesign rules so creativity serves the purpose.

- Seeking the wrong goal: optimize an indicator that doesn’t match real welfare.

  Way out: redefine goals/indicators to track actual system welfare.
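
As one worked illustration of these traps, the drift-to-low-performance pattern can be sketched with two coupled stocks: performance and the standard it is measured against. The model form, the name `simulate_standards`, and all rates are assumptions made for this sketch.

```python
def simulate_standards(erosion, periods=50, decay=1.0, correction=0.5):
    """Return (performance, standard) after `periods` steps.

    erosion = 0.0 keeps the standard absolute; erosion > 0 lets the
    standard slide toward whatever performance is currently achieved.
    """
    performance = standard = 100.0
    for _ in range(periods):
        gap = standard - performance
        # Performance decays on its own; effort is proportional to the gap.
        performance += correction * gap - decay
        # Eroding goal: the standard gives up part of the gap each period.
        standard -= erosion * gap
    return performance, standard

anchored = simulate_standards(erosion=0.0)
drifting = simulate_standards(erosion=0.2)

print(anchored, drifting)
```

With an absolute standard, performance stabilizes just below the standard. When the standard erodes, the gap never grows large enough to trigger a full correction, so both the standard and performance keep sliding.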

Leverage points: where small changes can create big shifts

People mostly push on low-leverage points (numbers), often in the wrong direction. Real leverage is higher: information, rules, goals, paradigms.

From low → high leverage:

- Numbers (parameters: taxes, subsidies, standards)

- Buffers (size of stabilizing stocks relative to their flows)

- Stock-and-flow structures (the plumbing)

- Delays (length of time lags relative to the rate of system change)

- Balancing feedback loops (strength of stabilizing controls)

- Reinforcing feedback loops (gain of compounding loops)

- Information flows (who knows what, when)

- Rules (incentives, constraints, constitutions)

- Self-organization (ability to evolve structure)

- Goals (purpose of the system)

- Paradigms (mindset generating goals/rules/structures)

- Transcending paradigms

The practical punchline is to move effort upward: tweaking numbers → redesigning feedback and information → changing rules and goals → challenging paradigms.

Conclusion

To change outcomes, stop arguing with events and start diagnosing structure. Identify stocks, flows, loops, and delays. Then choose leverage: if you want meaningful change, prioritize information, rules, goals, and paradigms over parameter tweaks.